Medina

Introduction

Medina is a board game. For my project in my Python programming course at UC during the Fall 2016 semester, I decided to implement this board game and create an AI to play it. The project is an attempt to build the board game Medina and add some form of machine learning to it. These goals were not fully met, but the results still proved interesting: the game was fully implemented, but a learning neural network was not implemented by the end of the project. I have gained a much better theoretical understanding of neural networks as a result of this project.

To find the source code of this project, go to the project page on GitHub. Below is the report I wrote on this project; to see the report as a PDF, click here. I translated the report to HTML and adjusted the style to fit my website design.

Table of Contents

- Introduction

- Table of Contents

- Usage

- The Game

- Design

- Objective

- Results

- Future Improvements

- References

- Libraries Used

- Development

Usage

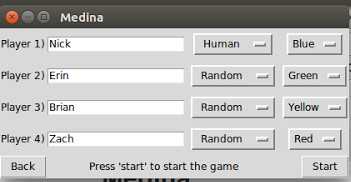

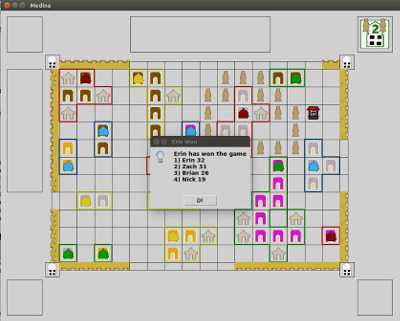

To use the project, python3 must be installed along with tkinter, TensorFlow and NumPy. To run the project, use the command “python3 Main.py”. Main.py is a script that launches a small graphical display with a play game button. Pressing play game loads a panel where options can be selected to set up a game. As of this writing, the only player options are a human player or a random computer player. All human players play on the same computer, and a small dialog appears to inform each player when it is his or her turn. Once a game is completed, the application announces the final scores. After that, the game window can be closed and the application returns to the main window.

The application does not explain how to play the game; this could be a future improvement. When playing the game, it does enforce the game’s rules. These rules can be found in the rules PDF for Medina.

Start Panel

Options panel

Game Screen

The Game

Medina is a board game published by Stronghold Games (more information here, official website for game) and designed by Stefan Dorra. I claim no ownership of the game; this project is only an attempt to use machine learning to play it. The game is played by two to four players, who all build a city together. While building the city, players can claim buildings for points. Buildings near the well, market or walls are worth more points than buildings not close to anything important in the city.

Picture of the game in Real Life

Image from Board Game Geek by Julian Pombo uploaded on 2015-08-03 image source

Each player is given a limited amount of resources and on each of their turns, players can build the city or claim a building; players can take a total of two actions on each of their turns. There are four different colors of buildings and each player can only claim one of each color. After every player has claimed a building of each color, the game ends.
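The claiming rule and end condition described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the four colors come from this report, while the set-based representation and the function names `can_claim` and `game_is_over` are hypothetical and are not taken from the project's modules.

```python
# Hypothetical representation: each player's claims are a set of color names.
BUILDING_COLORS = frozenset({"grey", "brown", "orange", "purple"})


def can_claim(claimed_colors, color):
    """A player may claim a building only if they do not own that color yet."""
    return color in BUILDING_COLORS and color not in claimed_colors


def game_is_over(claims_by_player):
    """The game ends once every player has claimed one building of each color."""
    return all(claims == BUILDING_COLORS for claims in claims_by_player)


if __name__ == "__main__":
    claims = [{"grey", "brown"}, set(BUILDING_COLORS)]
    print(can_claim(claims[0], "orange"))  # player 0 has no orange building yet
    print(game_is_over(claims))            # player 0 still has colors to claim
```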

On each turn, a player can place any two pieces from the following list, including two of the same kind:

- Buildings : can be used to start a new building or grow an existing, unclaimed building. There are four colors of buildings: Grey, Brown, Orange and Purple.

- Rooftops : can be used to claim an unclaimed building currently on the board. A player can only own one of each color building.

- Stables : can be attached to an existing claimed building and grow the building for purposes of adjacency and scoring.

- Merchants : merchants are built in a chain across the board and award extra points to the buildings they are next to.

- Walls : walls are built around the edge of the board growing out from towers at the corners. Walls that are adjacent to buildings award extra points to the building for each wall touching the building.

While building, there are a few restrictions that players can use to take advantage of the current board and either further their own score or hurt other players’ ability to play. For example, only one unclaimed building of each color can exist at a time, and once a building is claimed it can only be extended by attaching stables.

Once the game has ended, the buildings each player has claimed are scored based on their position and the elements around them (walls, the well, merchants and stables all give additional points). For the full rules and scoring of the game, refer to the rulebook.

Design

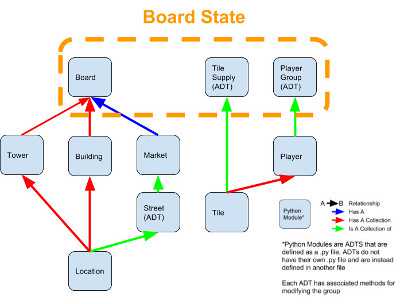

While working on the project, I made many design decisions concerning the organization and management of data. To keep these consistent, I followed a relatively uniform format: each kind of element in the board game gets its own module, which defines an abstract data type (ADT) representing that element. The ADT can then be used to find information about the element. The game consists of many different elements: a market, a collection of buildings, tiles, players, a board, and so on. Each of these ADT modules can be found in the GitHub repo. The documentation of each module describes the purpose of the ADT and the functions for interacting with it.
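As a rough illustration of this ADT style, the sketch below models one game element as plain immutable data plus module-level functions instead of a class with methods. Everything in it is hypothetical: the element, its fields, the function names and the piece counts are invented for the example and are not copied from the project's modules.

```python
from collections import namedtuple

# Hypothetical ADT: the pieces a player still has available.
# The field names are assumptions made for this sketch.
PieceSupply = namedtuple("PieceSupply", ["merchants", "walls", "stables"])


def make_piece_supply(merchants, walls, stables):
    """Constructor: build a new supply from piece counts."""
    return PieceSupply(merchants, walls, stables)


def get_num_walls(supply):
    """Accessor: callers ask the module instead of reading fields directly."""
    return supply.walls


def remove_wall(supply):
    """Return a new supply with one wall fewer; the original is unchanged."""
    if supply.walls <= 0:
        raise ValueError("no walls left in the supply")
    return supply._replace(walls=supply.walls - 1)


if __name__ == "__main__":
    supply = make_piece_supply(merchants=2, walls=3, stables=1)  # arbitrary counts
    print(get_num_walls(remove_wall(supply)))
```

Keeping the data immutable and routing every read or update through functions is one way to get the "module per element" organization described above without an object oriented interface.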

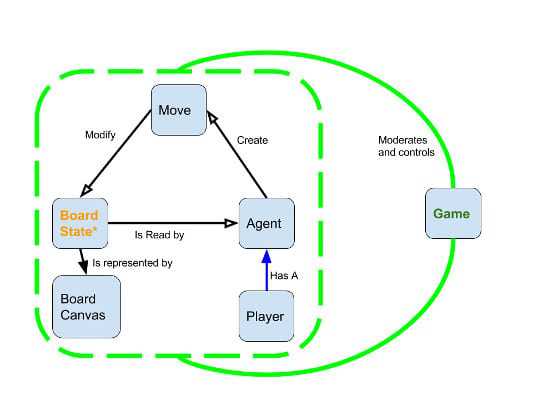

At a higher level, the game revolves around a board state. This board state is made up of three components: the players, a board, and the tile supply. The board state is modified by Moves. Moves are generated by Agents, and Agents are moderated by a Game. These interactions are shown to the user via the BoardCanvas, a class in the BoardCanvas.py module. Here are some diagrams that describe the relationships between these different aspects of the game. Again, each of these modules is described in detailed Python documentation in its respective file in the GitHub repo.

Diagram of the Definition of a Board State

Board state is an abstract definition, so there is no ADT or module for it. In different parts of the project, modules use a board, a tile supply and a collection of players together to represent the board state. This can be seen in the Agent module when getting moves from players: the board state is passed as three parameters.

Diagram of how the Game is controlled from the Game.py file

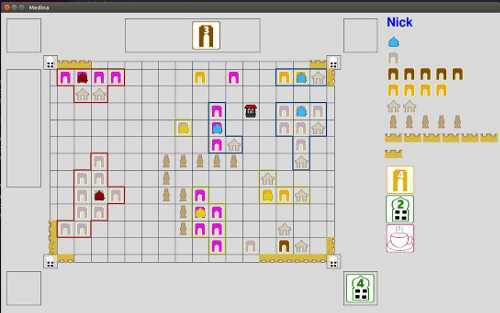

In addition to the game itself, there needed to be a display mechanism. This display lives almost exclusively in the BoardCanvas module in the BoardCanvas.py file. The graphical components of this project were all implemented using tkinter in Python 3. The BoardCanvas takes a board state and renders it on the screen. The HumanAgent module is responsible for rendering the board and making parts of it interactive through the BoardCanvas module. The BoardCanvas uses the tkinter Canvas widget to draw images on the screen and create interactive elements that a player can use to modify the board state and make moves.

Example of the board canvas with a human agent.

The game uses a setup of agents. As defined in the documentation, “an agent is responsible for making a move decision based on a board. Players will make two moves (unless it is the first turn, in which case only one move is allowed) This module will have support for getting all possible moves (or ranges for all types of moves as there are many similar moves of the same type such as placing buildings or stables). An agent only needs to be the following function: make_decision(board, player_index, players, tile_supply, num_moves)”
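To make the agent interface concrete, here is a minimal sketch of a random agent that exposes the `make_decision` signature quoted above. It is an assumption-laden illustration, not the project's code: `get_possible_moves` is a hypothetical helper standing in for the move-listing support the documentation mentions, and returning a single move (or `None` when nothing is legal) is also assumed.

```python
import random


def make_random_agent(get_possible_moves, rng=None):
    """Build an agent that picks uniformly among the legal moves.

    get_possible_moves is a hypothetical helper (not the project's API) that
    takes the same five arguments as make_decision and returns a list of moves.
    """
    rng = rng or random.Random()

    def make_decision(board, player_index, players, tile_supply, num_moves):
        moves = get_possible_moves(
            board, player_index, players, tile_supply, num_moves)
        if not moves:
            return None  # assumption: no legal move is signalled with None
        return rng.choice(moves)

    return make_decision


if __name__ == "__main__":
    # Stub move generator used only to exercise the agent.
    def stub_moves(board, player_index, players, tile_supply, num_moves):
        return ["place building", "place wall", "place stable"]

    agent = make_random_agent(stub_moves, random.Random(0))
    print(agent(None, 0, [], None, 2))
```

Because an agent is just a function with this signature, a stronger opponent can be swapped in without touching the rest of the game.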

As shown in the diagram of the Game, an agent makes moves that modify the board state. A human agent is slightly different: a human interacts with the board canvas to make moves, so there is an intermediate step between the board state and the player’s actions.
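The way a Game moderates agents can be sketched as a simple loop. This is a hedged outline rather than the contents of Game.py: `apply_move` and `is_over` are hypothetical helpers, the turn order is assumed to be round-robin, and passing the number of moves remaining as `num_moves` is an assumption about how that parameter is used. The one-move first turn follows the documentation quoted above.

```python
def play_game(agents, board, players, tile_supply, apply_move, is_over,
              max_turns=1000):
    """Moderate a game: ask each agent in turn for moves until the game ends.

    agents     -- one make_decision-style function per player
    apply_move -- hypothetical helper returning the updated
                  (board, players, tile_supply) after a move
    is_over    -- hypothetical helper reporting whether the game has ended
    """
    for turn in range(max_turns):
        if is_over(board, players, tile_supply):
            break
        player_index = turn % len(agents)
        # Two moves per turn, except the very first turn, which allows one.
        moves_this_turn = 1 if turn == 0 else 2
        for moves_left in range(moves_this_turn, 0, -1):
            move = agents[player_index](
                board, player_index, players, tile_supply, moves_left)
            board, players, tile_supply = apply_move(
                move, board, player_index, players, tile_supply)
    return board, players, tile_supply
```

A human agent fits the same loop: its decision function would simply block until the player finishes interacting with the board canvas.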

Diagram of Human Agent design.

Objective

My objectives at the start of the project:

- Implement the game in Python with a GUI interface and allow players to play the game.

- Make the game a network game so multiple players could play the same game on different machines. Network game play is a lower-priority objective.

- Add an AI to the game that utilizes machine learning and pattern recognition to make moves and become better at playing the game as time goes on.

- Give the AI the ability to watch and learn from records of games.

- Train the AI to the point at which it can consistently play the game and get a decent score.

- Possibly develop different versions of the AI that can play the game with different strategies (aggressive, risky, impatient) and difficulty.

Results

As can be clearly seen, these objectives were not all met: only the game was implemented, with no machine learning. Although there is no machine learning for Medina, higher-level game playing can be added through the Agent module. In the AIPractice directory, there are many files dedicated to creating and managing a neural network. These use the same agent layout to implement a neural network that can play Tic Tac Toe. This was modeled on Daniel Slater’s Alpha Toe project, in which he applied the machine learning techniques used by Google’s AlphaGo to Tic Tac Toe. I planned to do the same for Medina but ran out of time. A person can play against the Tic Tac Toe neural network by running the AIPractice/tttAI.py script with python3. The neural network trains against a random opponent using a policy gradient in the tttTrain.py script. Although only a random computer opponent was developed and tested for Medina, the Agent module allows for the easy creation of more competent opponents, including a neural network as shown by Tic Tac Toe.

Future Improvements

Some easy future improvements to this design would be implementing different kinds of opponents, such as those in the techniques directory of Daniel Slater’s Alpha Toe project. These techniques represent different kinds of machine learning and could be compared against each other or used to train a neural network. The kind of machine learning that the neural network would use is classified as reinforcement learning, in which the machine adjusts its decision-making process based on the results of previous experiences. For example, if a neural network performs well in a game, the recorded moves are weighted more favorably. The results obtained by Imran Ghory in his paper “Reinforcement Learning in Board Games” could be applied in more detail to Medina. Medina has an extremely complicated search space, and one player represents only a fourth of the actions in the game, so the challenge is mainly how a player can optimize their score given a limited amount of influence. The game has no random chance, so it is difficult even for humans to play well. Medina is not as complicated as Go but still represents an interesting problem, which is why I chose it for my project; it leaves ample room for improvement and analysis.
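The idea of weighting moves more favorably after a good result can be illustrated with a tiny policy-gradient (REINFORCE-style) update in NumPy. This is a toy sketch, not the tttTrain.py code and not a Medina player: the "game" is reduced to picking one of a few moves, each with an invented win probability, and the policy is a plain softmax over one weight per move rather than a neural network.

```python
import numpy as np


def softmax(logits):
    """Turn raw move preferences into a probability distribution."""
    shifted = logits - np.max(logits)  # subtract the max for numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum()


def train_policy(win_probability, episodes=3000, learning_rate=0.1, seed=0):
    """Learn move preferences from wins (+1) and losses (-1).

    win_probability is an invented stand-in for a real game: entry i is the
    chance that choosing move i leads to a win.
    """
    rng = np.random.default_rng(seed)
    logits = np.zeros(len(win_probability))
    for _ in range(episodes):
        probs = softmax(logits)
        action = rng.choice(len(probs), p=probs)
        reward = 1.0 if rng.random() < win_probability[action] else -1.0
        # Gradient of log pi(action) with respect to the logits of a softmax
        # policy: one-hot(action) - probs.
        grad_log_prob = -probs
        grad_log_prob[action] += 1.0
        # Moves that preceded a win become more likely, losses less likely.
        logits += learning_rate * reward * grad_log_prob
    return softmax(logits)


if __name__ == "__main__":
    print(train_policy([0.1, 0.9, 0.3]))
```

In a real board game the reward arrives only at the end, so the same update would be applied to every move recorded during the game.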

References

Ghory, Imran. “Reinforcement learning in board games”. May 4, 2004. Autonomous Learning Laboratory, College of Information and Computer Sciences, University of Massachusetts Amherst. (source)

Lundh, Fredrik. “An Introduction To Tkinter”. Effbot.org. 2005. (source)

“Python Tkinter Canvas”. Tutorials Point Simply Easy Learning. (source)

“Graphical User Interfaces with Tk” The Python Software Foundation. 2016. (source)

Slater, Daniel. “Alpha Toe”. GitHub. http://www.danielslater.net/. Nov 6, 2016. (github repo, source)

Singh, Aarti. “Neural Networks”. Carnegie Mellon University School of Computer Science. 2010. (source)

Stergiou, Christos and Siganos, Dimitrios. “Neural Networks”. Imperial College London. 1998. (source)

Tensor Flow. Google Brain Team. 2016. (source)

Shipman, John W. “Tkinter 8.5 reference: a GUI for Python”. New Mexico Tech Computer Center. www.nmt.ecu/tcc (source)

Libraries Used

Tkinter - for GUI elements

Tkinter is usually installed with most distributions of Python. To check whether it is installed, open the python3 interpreter and try the following commands.

import tkinter

tkinter._test()

This should give a basic window that can be interacted with.

If it does not give a window, the command to install tkinter on Ubuntu is “sudo apt-get install python3-tk”. More specific information on installing tkinter for each OS can be found here.

Tkinter will be used to make the GUI for the game.

NumPy - Mathematics Library

To install NumPy, use pip:

pip3 install numpy

NumPy is useful for the large amount of numerical computation involved in analyzing a board game.

TensorFlow - Neural Network Library

To install TensorFlow, use pip:

pip3 install tensorflow

TensorFlow is used to make and read neural networks.
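Before launching the project, it can help to confirm that all three libraries are importable. The short script below is a convenience sketch and is not part of the repository; it uses only the standard library's `importlib.util.find_spec`, and the function name `missing_modules` is made up for this example.

```python
import importlib.util

# Import names of the libraries listed in this section.
REQUIRED_MODULES = ("tkinter", "numpy", "tensorflow")


def missing_modules(names=REQUIRED_MODULES):
    """Return the module names that cannot be found on this Python install."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules()
    if missing:
        print("Missing libraries: " + ", ".join(missing))
    else:
        print("All required libraries were found.")
```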

Development

The entire development of this project is recorded in the commit log of the GitHub repository. Apart from the code taken from other projects, it was developed almost exclusively by me. The commit log documents what code was modified, when, and by whom. During the first two weeks of development, I attempted to build the project with an object oriented interface. After this, the project was changed to an abstract data type design for each element of the project. This change was a large setback: it caused other parts of the project to be cut and reduced the project's scope.